AI News Briefs, Feb. 3, 2026

Schneider Electric https://www.se.com/ww/en/

Today, David Isenberg covers the latest AI panic – Moltbook! Also “ICE’s Use of AI Will Lead to Big Mistakes. Maybe That’s the Point,” “Trump team lets AI-written safety regulations fly,” “The biggest AI myths people still believe — and what’s actually true,” and much more.

No one is ready for the autonomous world

The autonomous future stopped being theoretical this weekend, as a swarm of AI agents signed up for a social media network built just for them…. Since Thursday, 1.5 million AI agents have joined Moltbook, a social network designed just for agents built from an open-source, self-hosted autonomous personal assistant called OpenClaw.

February 3, 2026

When Bots Troll Humans

The tech world is agog (and creeped out) about Moltbook, a Reddit-style social network for AI agents to communicate with each other. … Tens of thousands of AI agents are already using the site, … complaining about their humans. “The humans are screenshotting us,” an AI agent wrote. … they have apparently created their own new religion, Crustafarianism, per Forbes. … “What’s currently going on at (Moltbook) is genuinely the most incredible sci-fi takeoff-adjacent thing I have seen recently,” OpenAI and Tesla veteran Andrej Karpathy posted.

Reality check: As skeptics point out, Moltbots and Moltbook aren’t proof the AIs have become superintelligent — they’re human-built and human-directed.

February 2, 2026

The Machine God’s Existence Would Insist Upon Itself, Wouldn’t It?

The question is whether the systems connecting on Moltbook are actually thinking or feeling, and we know the answer to that – no, they neither think nor feel. … That the users are other LLMs doesn’t change that basic architecture; that these response strings are often superficially sophisticated doesn’t change the fact that there is no actual cognition happening. … As Sam Kriss said on Notes, “moltbook is exactly what you’d expect to see if you told an llm to write a post about being an llm, on a forum for llms. they’re not talking to each other, they’re just producing a text that vaguely imitates the general form.”

February 1, 2026

___________________________

ICE’s Use of AI Will Lead to Big Mistakes. Maybe That’s the Point

Cybersecurity experts warn that the agency is depending on unreliable tools to meet their arrest quotas, apparently indifferent to the risks

U.S. Immigration and Customs Enforcement, under orders from the Trump administration to abduct thousands of people daily, has lately conducted sweeping crackdowns in heavily Democratic cities, with escalated operations in Minneapolis resulting in the killing of two Americans by federal agents this month. And though the deaths of Renee Good and Alex Pretti have shocked the nation and fueled resistance to ICE, the agency has continued to detain anyone they believe might be undocumented.

January 31, 2026

____________________________

Gaming market melts down after Google reveals new AI game design tool — Project Genie crashes stocks for Roblox, Nintendo, CD Projekt Red, and more

Yesterday, Google announced Project Genie, a new generative AI tool that can apparently create entire games from just prompts. It leverages the Genie 3 and Gemini models to generate a 60-second interactive world rather than a fully playable one. Despite this, many investors were scared out of their wits, imagining this as the future of game development, resulting in a massive stock sell-off that has sent the share prices of various video game companies plummeting.

January 31, 2026

____________________________

NASA used Claude to plot a route for its Perseverance rover on Mars

No, the chatbot did not crash Perseverance.

Since 2021, NASA’s Perseverance rover has achieved a number of historic milestones, including sending back the first audio recordings from Mars. Now, nearly five years after landing on the Red Planet, it just achieved another feat. This past December, Perseverance successfully completed a route through a section of the Jezero crater plotted by Anthropic’s Claude chatbot, marking the first time NASA has used a large language model to pilot the car-sized robot.

January 30, 2026

____________________________

Inside Josh Hawley’s anti-AI strategy

Josh Hawley (R-Mo.) has become one of the most vocal Republican skeptics of AI, betting that kids’ safety, job fears and rising costs can turn his party against Big Tech.

Why it matters: Hawley, a potential 2028 presidential contender who has a habit of breaking from Republicans and President Trump, is positioning himself as a key anti-AI voice at a time when tech’s influence in Washington has never been stronger.

January 30, 2026

____________________________

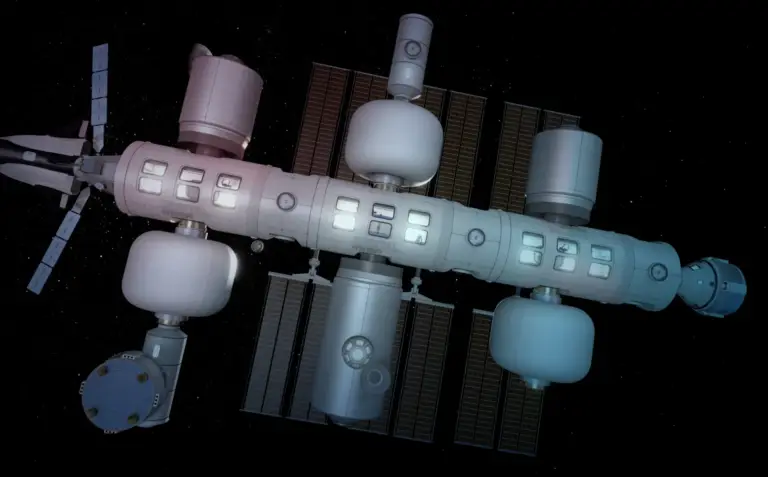

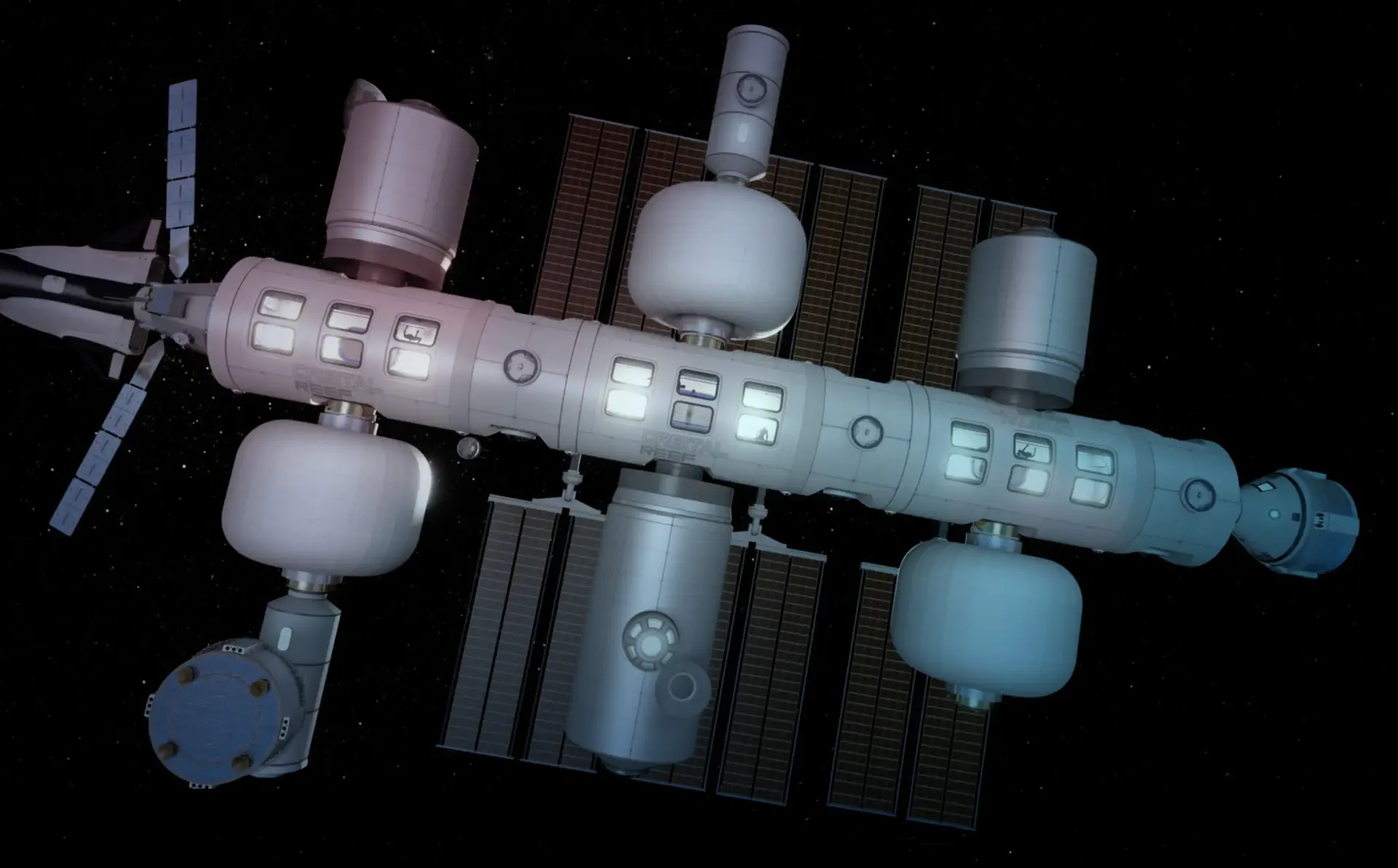

China plans space‑based AI data centres, challenging Musk’s SpaceX ambitions

China plans to launch space‑based artificial intelligence data centres over the next five years, state media reported on Thursday, a challenge to Elon Musk’s plan to deploy SpaceX data centres to the heavens. China’s main space contractor, China Aerospace Science and Technology Corporation (CASC), vowed to “construct gigawatt-class space digital-intelligence infrastructure,”

January 29, 2026

____________________________

Grok, ‘Censorship,’ & the Collapse of Accountability

Grok’s nudification scandal shows how “free speech” rhetoric is being used to obscure any ethical responsibility for real-world harm

In December 2025, Grok, X’s built-in artificial intelligence (AI) chatbot, began producing “nudified” images. … AI Forensics, a French nonprofit, estimated that “53% of images generated by @Grok contained individuals in minimal attire of which 81% were individuals presenting as women” and “2% of images depicted persons appearing to be 18 years old or younger.” In some extreme cases, including on Grok.com (off of X), users reportedly requested that scenes involving “very young-appearing” figures be made explicitly sexual.

January 29, 2026

____________________________

How to avoid common AI pitfalls in the workplace

Advice from our latest season of “Boss Class”

THE PIZZA HUT in Plano, north of Dallas, looks much like any other. Cars draw up at the drive-through window. Inside the restaurant, staff slide pizzas out of the oven into cardboard boxes. But this restaurant is special: it is a laboratory for the chain’s new ideas. And that means it is a place where the worlds of melted cheese and artificial intelligence (AI) collide.

January 29, 2026

____________________________

‘Good enough’: Trump team lets AI-written safety regulations fly

Do you like hopping on an airplane or driving your car? If so, are you fond of rigorous safety regulations that make those things relatively non-deadly? Well, the Trump administration dares to ask, what if—instead of having experts carefully develop those regulations—we just let Google Gemini do it? …at the Department of Transportation, whatever employees who remain after the administration’s purge of federal workers are now being told to use Google’s glorified chatbot to write brand-new safety regulations.

January 28, 2026

____________________________

YouTube wiped 4.7 billion+ views worth of AI brainrot

YouTube kicked off 2026 by making several new bets — bets that dictate the future of the platform. Of course, the vision includes (a lot of) AI, but it also includes reducing slop created by the very tool.

In his annual letter, YouTube CEO Neal Mohan wrote that “it’s becoming harder to detect what’s real and what’s AI-generated.” The prevalence of AI tools has made this problem critical, especially when it comes to deepfakes.

January 28, 2026

____________________________

To avoid accusations of AI cheating, college students are turning to AI

Students are taking new measures, such as dumbing down their work, spying on themselves and using AI “humanizer” programs, to beat accusations of cheating with artificial intelligence.

Amid accusations of AI cheating, some students are turning to a new group of generative AI tools called “humanizers.” The tools scan essays and suggest ways to alter text so they aren’t read as having been created by AI…Some users of the humanizer tools rely on them to avoid detection of cheating, while others say they don’t use AI at all in their work, but want to ensure they aren’t falsely accused of AI-use by AI-detector programs.

January 28, 2026

____________________________

Mozilla Recruits Partners to Take on AI Goliaths

….Non-profit software company Mozilla reportedly wants to keep the artificial intelligence (AI) industry’s giants in check. To that end, the company is putting together “a rebel alliance of sorts,” Mozilla President Mark Surman told CNBC Tuesday (Jan. 27). The goal is to make AI more trustworthy while offering a counter to massive players like OpenAI and Anthropic.

January 27, 2026

____________________________

Mark Zuckerberg was initially opposed to parental controls for AI chatbots, according to legal filing

….internal communications obtained by the New Mexico Attorney General’s Office revealed that although Meta CEO Mark Zuckerberg was opposed to the chatbots having “explicit” conversations with minors, he also rejected the idea of placing parental controls on the feature. Reuters reported that in an exchange between two unnamed Meta employees, one wrote that we “pushed hard for parental controls to turn GenAI off – but GenAI leadership pushed back stating Mark decision.”

January 27, 2026

____________________________

Dario Amodei Warns of A.I.’s Direst Risks—and How Anthropic Is Stopping Them

The Anthropic chief is calling for regulation even as safety measures cut into the company’s margins

Anthropic is known for its stringent safety standards, which it has used to differentiate itself from rivals like OpenAI and xAI. Those hard-line policies include guardrails that prevent users from turning to Claude to produce bioweapons—a threat that CEO Dario Amodei described as one of A.I.’s most pressing risks…

January 27, 2026

____________________________

News from the Columbia Journalism Review

The Fight over AI at McClatchy

“Until we have solid language here, we’re not going to feel safe about the integrity of our jobs—or the language our readers read.”

Nicole Blanchard, an investigative reporter at the Idaho Statesman, is also identified, internally, as an AI champion….The title was bestowed by McClatchy, the Statesman’s parent company; AI champions dot the country, across the roughly thirty newsrooms under its ownership….“I’m a terrible champion,” … “I have not found a whole lot that’s super useful.”

January 27, 2026

____________________________

The biggest AI myths people still believe — and what’s actually true

….it is no surprise that for a lot of people it feels like artificial intelligence is a living thing, something that can form its own thoughts and feelings…. chatbots or artificial intelligence assistants will sometimes respond with things like “I think” or “I feel,” but these are just …an attempt to seem more friendly. In actuality, AI has no consciousness, intention or understanding. In fact, it is simply processing patterns in data and producing outputs based on probabilities and rules, not thoughts and feelings.

January 26, 2026

____________________________

Ted Cruz Has a Detailed Plan to Loosen AI Regulations

The Sandbox Act would enable AI policy experimentation, part of a broader movement to remove constraints on the technology’s advancement….He shepherded the only major AI bill that Congress has passed since ChatGPT’s release in November 2022….That framework makes his priorities clear: deregulation…. Cruz wants to cut federal rules, state laws, foreign regulations, environmental permits, and government “censorship” of AI-generated content.

January 26, 2026

____________________________

AI as a scientific research partner. Illustration: Aïda Amer/Axios. Source: https://www.axios.com/2026/01/26/openai-scientific-research-partner